GarmentNets:

Category-Level Pose Estimation for Garments via Canonical Space Shape Completion

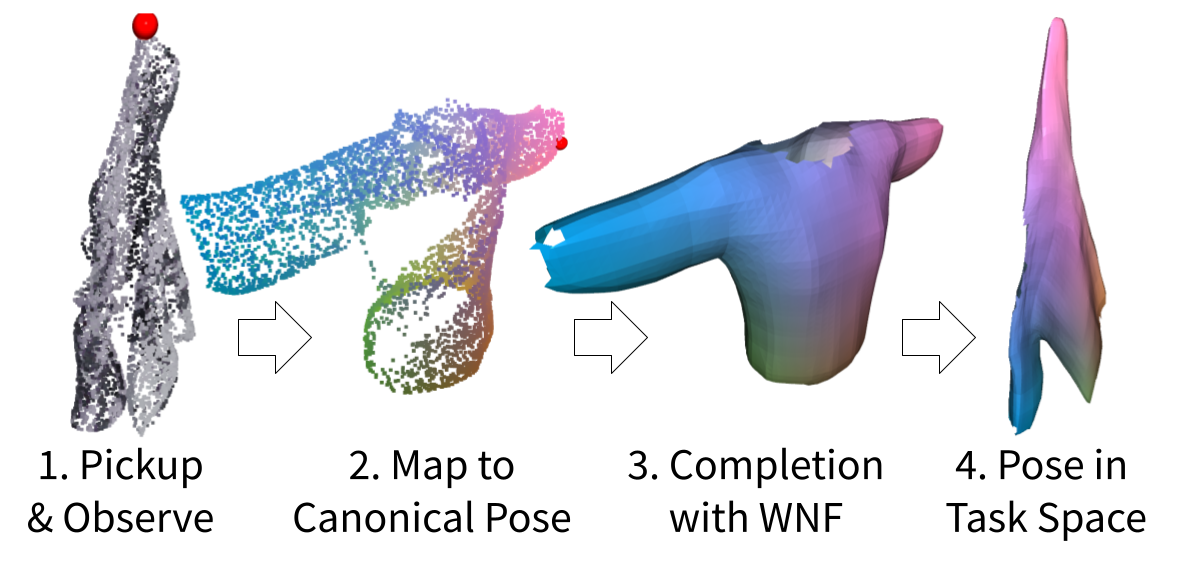

This paper tackles the task of category-level pose estimation for garments. With a near infinite degree of freedom, a garment's full configuration (i.e., poses) is often described by the per-vertex 3D locations of its entire 3D surface. However, garments are also commonly subject to extreme cases of self-occlusion, especially when folded or crumpled, making it challenging to perceive their full 3D surface. To address these challenges, we propose GarmentNets, where the key idea is to formulate the deformable object pose estimation problem as a shape completion task in the canonical space. This canonical space is defined across garments instances within a category, therefore, specifies the shared category-level pose. By mapping the observed partial surface to the canonical space and completing it in this space, the output representation describes the garment's full configuration using a complete 3D mesh with the per-vertex canonical coordinate label. To properly handle the thin 3D structure presented on garments, we proposed a novel 3D shape representation using the generalized winding number field. Experiments demonstrate that GarmentNets is able to generalize to unseen garment instances and achieve significantly better performance compared to alternative approaches.

Paper

Latest version: arXiv:2104.05177 [cs.CV] or here.

Poster for ICCV here

Code

Code and instructions to download data: Github

Team

Bibtex

@inproceedings{chi2021garmentnets,

title={GarmentNets: Category-Level Pose Estimation for Garments via Canonical Space Shape Completion},

author={Chi, Cheng and Song, Shuran},

booktitle={The IEEE International Conference on Computer Vision (ICCV)},

year={2021}

}

Technical Summary Video (with audio)

Acknowledgements

The authors would like to thank Eric Cousineau, Benjamin Burchfiel Naveen Kuppuswamy, and other researchers in Toyota Research Institute for their helpful feedback and fruitful discussions.We would also like to thank Huy Ha for data collection, Google for their donation of UR5 robots. This work was supported in part by the Amazon Research Award and the National Science Foundation under CMMI-2037101.

Contact

If you have any questions, please feel free to contact Cheng Chi